You've seen this pattern before. Maybe not with AI. But it's identical.

You built your lead generation on Facebook organic reach. Spent months creating content, building an audience, dialing in what worked. Then the algorithm changed. Overnight. Organic reach dropped to single digits. Everything you built on their platform, subject to their rules.

Or maybe it was Google. You ranked on page 1 for your best keywords. Leads were flowing. Then an update rolled through and your traffic disappeared. Not because your content got worse. The platform changed how it measured value.

Or email. You built a list, nurtured it for years, and your deliverability tanked because your ESP changed their infrastructure.

The pattern is always the same. You build on someone else's foundation. They change the rules. You start over.

Right now, the same pattern is playing out with AI. Most businesses don't see it yet.

Key Takeaways:

- Anthropic's leaked roadmap reveals 3 features: self-improving agents, persistent memory, and context compression

- All 3 share the same weakness: knowledge stored in platform memory is temporary

- Businesses that store AI knowledge in permanent, owned files will benefit most when these features ship

- The best businesses have always built systems that run without the founder in the room. AI is no different. Platform features become accelerators when you own the foundation

Your AI Knowledge Is Sitting on Rented Land

If you're using AI in your business today, you're probably doing something smart with it. You've set up Projects in Claude. Maybe you've written custom instructions in ChatGPT. You've built workflows. Maybe trained your team on how to prompt effectively.

Here's the question nobody's asking: where does all of that knowledge actually live?

For most businesses, the answer is inside the platform.

Your corrections live in conversation history. Brand context lives in a Project file the AI reads once at the start of each session. Your preferences are scattered across threads that get compressed or forgotten the moment a conversation runs long.

It works. Today.

But it's rented land. And the landlord is about to renovate.

What Anthropic's Leaked Roadmap Actually Revealed

On March 31, 2026, Anthropic accidentally published the full source code of Claude Code to the public npm registry. Not a teaser. Not a blog post. The entire codebase. Nearly 2,000 files. 500,000 lines of code.

Buried inside were 3 features that haven't shipped yet. Together they show where AI platforms are heading, and that matters for anyone building AI into their business.

1. Your AI Will Soon Improve Itself While You Sleep

Picture this. You close your laptop for the day. While you're away, your AI reviews every output it produced. It finds patterns in the mistakes it made. It drafts updated instructions for itself based on what it learned. When you sit down the next morning, the AI is sharper than it was yesterday.

Anthropic is building this into the platform natively. Scheduled background agents running on automatic cycles. Improving without you being there.

There's a principle in business that applies perfectly here. The businesses that last are the ones that build systems capable of running without the founder in the room. Not because the founder doesn't matter. Because the founder built something that holds its shape when they step away. The knowledge lives in the system, not in the person's head.

That's exactly what self-improving AI agents are. Systems that get better on their own. But only if the knowledge is somewhere the system can reach it.

If your corrections, your context, and your improvement logic are stored in structured files the background agent can read, it has everything it needs. It gets smarter overnight. Automatically.

If that data lives inside conversation history that gets compressed after a few sessions? The agent has nothing meaningful to work with. It spins in circles. Like an employee who has to ask the owner the same question every morning because nobody wrote it down.

The difference is where your knowledge lives. Not whether you have it.

(I tested this. I built a version that already runs a weekly improvement loop off stored corrections. When the leak surfaced, nothing needed to change. The architecture already fit.)
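Here's a minimal sketch of what a weekly improvement loop like that could look like in Python. It isn't the build described above. The log file name (`corrections.jsonl`), its fields (`date`, `rule`), the function names, and the rule that a correction must recur at least twice before it gets promoted are all assumptions for illustration.

```python
import json
from collections import Counter
from datetime import date, timedelta
from pathlib import Path

# Hypothetical log format: one JSON object per line, e.g.
# {"date": "2026-04-02", "rule": "Say 'clients', never 'customers'"}
CORRECTIONS_LOG = Path("corrections.jsonl")


def load_recent_corrections(path: Path, since: date) -> list[dict]:
    """Read every correction logged on or after `since`."""
    recent = []
    with path.open(encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if not line:
                continue  # tolerate blank lines in the log
            entry = json.loads(line)
            if date.fromisoformat(entry["date"]) >= since:
                recent.append(entry)
    return recent


def draft_instruction_updates(corrections: list[dict], min_count: int = 2) -> str:
    """Turn corrections that recur at least `min_count` times into draft rules."""
    counts = Counter(c["rule"] for c in corrections)
    recurring = [rule for rule, n in counts.most_common() if n >= min_count]
    if not recurring:
        return ""
    lines = ["## Draft rules from this week's corrections (review before merging)"]
    lines += [f"- {rule}" for rule in recurring]
    return "\n".join(lines)


# Only runs when executed directly and the log actually exists.
if __name__ == "__main__" and CORRECTIONS_LOG.exists():
    week_ago = date.today() - timedelta(days=7)
    recent = load_recent_corrections(CORRECTIONS_LOG, since=week_ago)
    print(draft_instruction_updates(recent) or "No recurring corrections this week.")
```

Schedule it weekly with something like cron. The output is a draft on purpose: a person reviews it before anything changes in the instruction file, so one bad correction can't quietly rewrite the rules.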

2. AI Memory Across Conversations Is Coming. But It's Fragile.

Even if you're using Projects or custom instructions to give your AI context, it still doesn't learn from working with you. It reloads the same static instructions every time. The corrections you made Tuesday? The preferences you taught it last week? They don't carry forward. The AI isn't getting smarter between sessions. It's resetting.

Anthropic's fix is a background process that consolidates what the AI learned across multiple sessions and carries it forward into the next one. You can read about their approach to persistent context and caching in their documentation.

Smart concept. But there's 1 problem.

That consolidated memory gets stored inside the AI's temporary working space. The same space that gets compressed when conversations run long. If compression fires and something gets lost, there's no backup. No file to reference. No recovery.

Here's your safeguard. Store every correction, every brand guideline, every piece of business context in permanent files on your machine. Indexed so the AI can search them. Permanent so they can't disappear.

When the platform memory feature ships, it becomes an extra layer on top of what you already have. If their memory loses a correction during a long conversation, your files still have it. On disk. Always available. Always complete.

The platform becomes a cache. Your files stay the source of truth.
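What does "permanent and indexed" look like in practice? Here's a minimal Python sketch, assuming one Markdown file per guideline in a local folder and a toy keyword index. The folder layout and every function name are hypothetical. A real setup would more likely rely on whatever file search your AI tool already provides. The point is that the source lives on disk, in plain text, where any tool can read it.

```python
import re
from collections import defaultdict
from pathlib import Path


def save_note(folder: Path, name: str, text: str) -> Path:
    """Write one piece of business context to its own plain-text Markdown file."""
    folder.mkdir(parents=True, exist_ok=True)
    path = folder / f"{name}.md"
    path.write_text(text, encoding="utf-8")
    return path


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; deliberately simple (no stemming, no stop words)."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def build_index(folder: Path) -> dict[str, set[str]]:
    """Inverted index: word -> names of the notes that contain it."""
    index: dict[str, set[str]] = defaultdict(set)
    for path in folder.glob("*.md"):
        for word in tokenize(path.read_text(encoding="utf-8")):
            index[word].add(path.stem)
    return index


def search(index: dict[str, set[str]], query: str) -> list[str]:
    """Rank notes by how many distinct query words they contain, best first."""
    scores: dict[str, int] = defaultdict(int)
    for word in tokenize(query):
        for name in index.get(word, ()):
            scores[name] += 1
    return sorted(scores, key=lambda name: (-scores[name], name))
```

Usage: call `save_note(Path("knowledge"), "brand-voice", "...")` whenever you correct the AI, then `search(build_index(Path("knowledge")), "brand voice")` returns the matching note names, best match first. Rebuilding the index from the files each time keeps the files as the only source of truth.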

3. Long AI Conversations Can Silently Scramble Your Instructions

This is the one that should concern you most.

When a conversation gets too long, the AI has to compress older messages to make room for new ones. It runs a summarization process that condenses everything into a shorter version. The problem: that summarizer doesn't know the difference between a casual back-and-forth message and the critical rules you set at the start.

Your AI can forget its own instructions mid-conversation. No error message. No warning. Just lower quality work because the rules got compressed into a summary that lost the details.

If your AI's instructions only exist in the conversation context, there's nothing to fall back on.

The fix is simple. Store your AI's core instructions in a source file. Before any critical output, have it re-read from the original. Not from memory. Not from a compressed summary. From the file. The conversation can compress all it wants. The instructions stay fresh.
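A minimal Python sketch of the re-read step, assuming your instructions live in a plain text file. The function names and the `<instructions>` wrapper are hypothetical conventions, not part of any AI platform's API. The file is read fresh on every call, so nothing depends on what survived compression, and each call returns a short SHA-256 fingerprint you can log alongside the output.

```python
import hashlib
from pathlib import Path


def read_instructions(path: Path) -> tuple[str, str]:
    """Read the source-of-truth instructions from disk on every call (no caching).

    Returns the text plus a short SHA-256 fingerprint identifying this version.
    """
    text = path.read_text(encoding="utf-8")
    fingerprint = hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
    return text, fingerprint


def prompt_for_critical_output(path: Path, task: str) -> tuple[str, str]:
    """Place the original instructions, not a summary, directly ahead of the task."""
    instructions, fingerprint = read_instructions(path)
    prompt = (
        "Follow these instructions exactly. They override anything earlier "
        "in the conversation.\n\n"
        f'<instructions version="{fingerprint}">\n{instructions}\n</instructions>\n\n'
        f"Task: {task}"
    )
    return prompt, fingerprint
```

Send the returned prompt through whatever client or chat window you already use. If an output ever looks off, the fingerprint tells you exactly which version of the instructions it was produced under.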

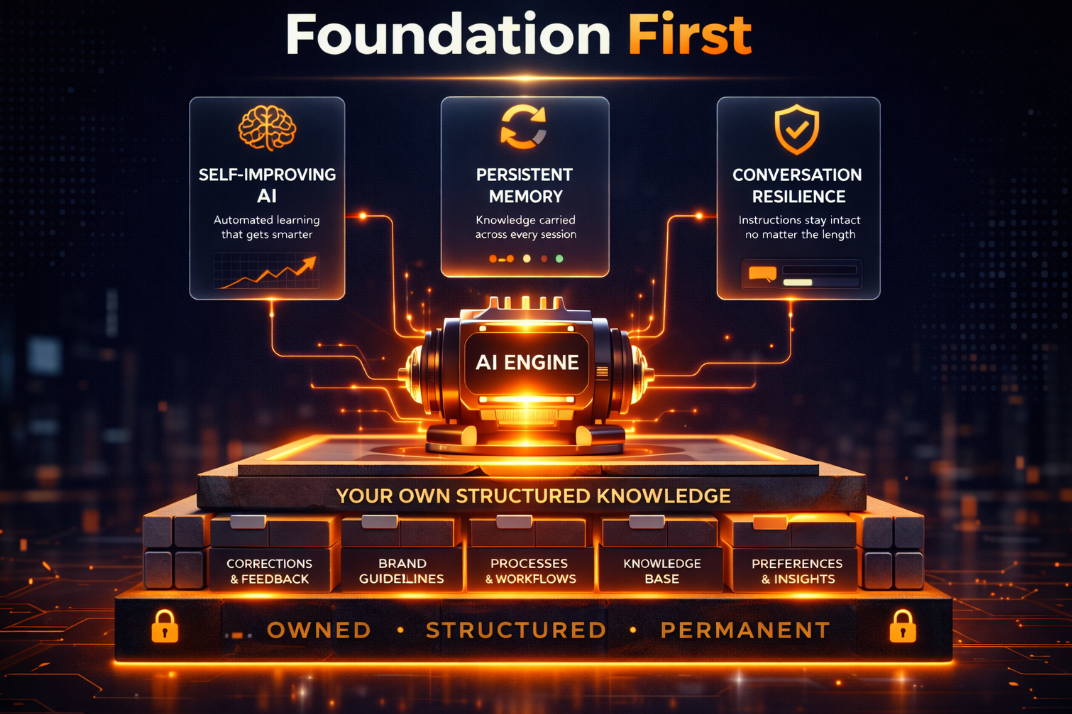

Why the Best Businesses Already Know This Principle

Every one of these 3 features has the same vulnerability: knowledge that lives only inside the platform's memory is temporary.

It can be compressed. Lost during consolidation. Overwritten by an update you didn't ask for.

Michael Gerber wrote about this decades ago in The E-Myth Revisited. Most businesses fail because everything depends on the owner showing up. The owner's memory. The owner's judgment calls. The owner's presence in the room. The business doesn't have systems. It has a person. And when that person is unavailable, the whole thing stalls.

Gerber's fix: build systems that hold the knowledge independently. Document the process. Make the business run on structure, not on whoever happens to be available.

AI has the exact same problem right now.

Most businesses using AI are the “technician” in Gerber's framework. All the intelligence lives in the conversation. In the owner's prompts. In platform memory that can vanish. Nothing is documented in a place the system can access on its own.

The businesses that will get the most out of these features when they ship are the ones that already have their AI knowledge stored somewhere permanent. Files on their machine. Structured, indexed, and searchable. Not inside a conversation thread. Not inside a Project that gets compressed after a long session.

When background agents ship, they need structured data to work with. Permanent files give them that.

When persistent memory ships, it becomes an extra layer on top of your permanent records. If the platform loses something, your files still have it.

When compression happens in long sessions, your AI re-reads its instructions from the source. Not from a summary of a summary. From the original.

The platform features become accelerators. Not the foundation.

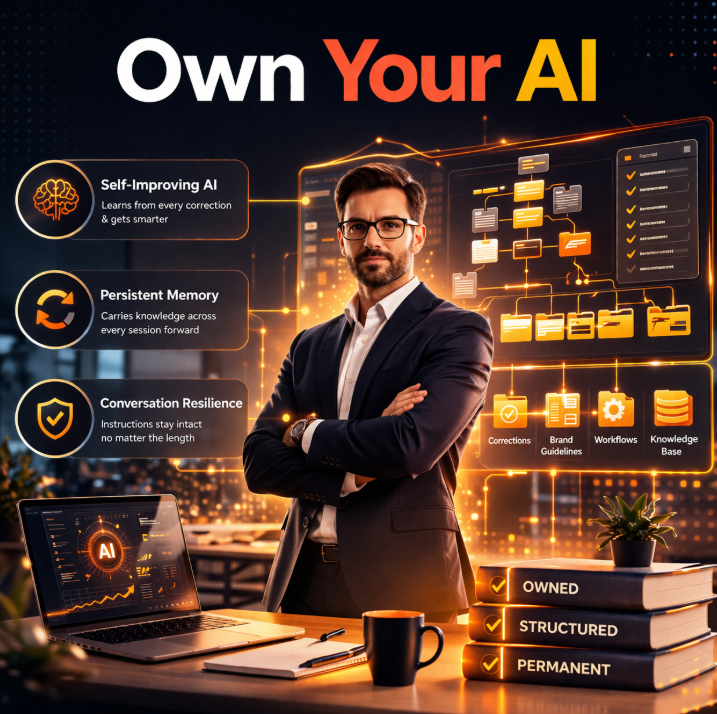

Own Your AI the Way You'd Build a Business That Runs Without You

Go back to the pattern from the beginning. Facebook. Google. Email platforms. The businesses that survived every shift had 1 thing in common. They owned their core infrastructure. Their customer data, their content, their processes. The platforms were distribution channels. Not foundations.

The same principle applies to AI.

If your AI knowledge, your corrections, your brand context, and your business logic all live inside the platform, you're 1 update away from starting over. If they live in files you own, structured and portable, every platform upgrade makes your system faster. Not obsolete.

The leaked roadmap didn't reveal a threat. It confirmed the strategy.

Build systems that hold their knowledge independently. The platforms will catch up. And when they do, your AI will already know what to do with the new features. Because the foundation was yours from the start.

Want to see where AI can eliminate your biggest bottleneck? Take a free Hiring Tax Diagnostic and we'll map exactly where you're bleeding time and money.

Frequently Asked Questions

What was in the Claude Code leak?

On March 31, 2026, Anthropic accidentally published Claude Code's full source code to the npm registry. Nearly 2,000 files. 500,000 lines. Inside were 3 unreleased features: background AI agents that self-improve on automatic cycles, persistent memory that carries across conversations, and context compression that can silently scramble your instructions during long sessions.

How do I protect my business AI from platform changes?

Store your corrections, brand guidelines, and business context in structured files you own. Not in conversation threads. Not in platform memory. On your machine, indexed so the AI can search them. Think of it like Gerber's principle from The E-Myth Revisited: the knowledge needs to live in the system, not in somebody's head. When platform features like persistent memory ship, they layer on top of what you already own. Your files are the source of truth. Platform memory is just a cache.

Can AI context compression lose my instructions?

Yes. And it won't tell you it happened. When conversations get long, the AI compresses older messages to make room. That summarization can't tell the difference between a throwaway message and a critical rule you set at the start. The fix: store your core instructions in a file and have the AI re-read from the source before any important output.