Think about the last person you hired.

First week, they needed everything spelled out. Where to find the files. How to format the report. Which clients get the detailed invoice and which ones get the summary. You corrected them constantly. That's normal.

By month 2, the corrections dropped. They remembered. By month 6, they were anticipating what you needed before you asked. Every correction you gave them in those early weeks compounded into instinct.

That's how learning works. Correction stacks on correction. Context builds on context. The person gets sharper because they retain what you teach them.

Now think about how most businesses use AI.

Key Takeaways

- Standard “human in the loop” workflows erase every correction once the AI session ends

- Compound corrections store fixes, extract patterns, and pre-load them as context for the next run

- The difference between supervising AI and shaping it determines whether your time investment compounds or resets to zero

Your AI Has Amnesia and Nobody's Talking About It

The standard “human in the loop” process looks like this. You give your AI a task. It produces a draft. You review it, fix the mistakes, approve the output. Done.

Session ends.

Here's the part that should bother you: every correction you just made? Gone. The AI doesn't carry it forward. Next time it runs, it starts from scratch. Same mistakes. Same fixes. Another 20 minutes you already spent last week.

You're not training a system. You're babysitting one.

Ray Dalio built Bridgewater into the largest hedge fund in the world on a single idea: every mistake is a learning opportunity, but only if you record it, extract the principle behind it, and encode that principle into a system. He built that idea into a culture he called “radical transparency.” His team logged every error, pulled out the rule behind it, and fed that rule back into their decision-making process. The organization got smarter from every failure because the failures were captured, not forgotten.

Most businesses running AI today do the opposite. They correct the output, close the session, and let the correction vanish. Research from MIT found that 95% of enterprise generative AI pilots deliver no measurable return. Not because the AI is bad. Because the system around it never learns. McKinsey reports that only 6% of companies qualify as AI “high performers,” meaning AI contributes meaningfully to their bottom line.

Think about what that means for your week. If you spend 20 minutes per session correcting AI outputs and you run 5 sessions a week, that's over 80 hours a year re-teaching a system that forgets everything overnight.
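The yearly figure is simple multiplication. Here is a minimal sketch, assuming a 52-week year with the same workload every week (the function name is illustrative):

```python
def yearly_rework_hours(minutes_per_session: float, sessions_per_week: int, weeks_per_year: int = 52) -> float:
    """Hours per year spent re-applying corrections the AI never retains.

    Assumes the same number of sessions every week and no weeks off.
    """
    return minutes_per_session * sessions_per_week * weeks_per_year / 60


hours = yearly_rework_hours(20, 5)
print(f"{hours:.1f} hours per year")  # 86.7 hours per year
```

Twenty minutes across five sessions is 100 minutes a week, which over 52 weeks comes to roughly 87 hours.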

Dalio wouldn't accept that from a junior analyst. You shouldn't accept it from your AI.

What Changes When Your Corrections Start Compounding

Instead of letting corrections disappear, you store them. Every fix becomes a searchable document. Every pattern gets extracted. Every preference gets loaded before the next run starts.

Here's what that looks like in practice.

I built a system where every correction gets stored in QMD (think of it as a searchable library of every fix I've ever made). Structured. Tagged. Retrievable by any AI agent on the next run. From there, Honcho (a relational memory layer) extracts the patterns automatically. It doesn't just remember “fix this sentence.” It learns the deeper rules: “He writes direct. Hates jargon. Wants the data before the opinion.”
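The post names QMD for storage and Honcho for pattern extraction but does not show their interfaces, so the sketch below uses neither. As a rough illustration of the storage idea alone, here is a hypothetical in-memory correction store. The `Correction` and `CorrectionStore` names, the fields, and the substring search are all assumptions, not either tool's API.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Correction:
    """One human fix to an AI output, stored as a structured, tagged record (hypothetical schema)."""
    task: str     # which kind of work was corrected, e.g. "client_proposal"
    before: str   # what the AI produced
    after: str    # what the human changed it to
    tags: list[str] = field(default_factory=list)
    created: date = field(default_factory=date.today)


class CorrectionStore:
    """Hypothetical in-memory stand-in for a searchable correction library.

    A real deployment would persist records and use full-text or vector
    search; this sketch filters a Python list with exact and substring matches.
    """

    def __init__(self) -> None:
        self._records: list[Correction] = []

    def add(self, correction: Correction) -> None:
        self._records.append(correction)

    def search(self, task: Optional[str] = None, tag: Optional[str] = None,
               text: Optional[str] = None) -> list[Correction]:
        """Return records matching every filter that was supplied."""
        results = self._records
        if task is not None:
            results = [c for c in results if c.task == task]
        if tag is not None:
            results = [c for c in results if tag in c.tags]
        if text is not None:
            needle = text.lower()  # case-insensitive substring match
            results = [c for c in results
                       if needle in c.before.lower() or needle in c.after.lower()]
        return results


if __name__ == "__main__":
    store = CorrectionStore()
    store.add(Correction("client_proposal", "Here is our timeline.",
                         "Pricing summary comes first.", tags=["structure"]))
    print(len(store.search(tag="structure")))
```

The design point is that each fix is saved with enough structure (task, tags, before and after) that a later run can retrieve it by what it was about, not just by when it happened.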

That's Dalio's principle machine, applied to AI. Every correction becomes a principle. Every principle gets encoded. The system compounds.

Before the next agent run even starts, the system queries both layers and loads every relevant correction as context.
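A minimal sketch of that pre-loading step, assuming both layers have already been queried and their results are plain Python data: distilled principles keyed by task, plus a list of raw corrections. The function names, the data shapes, and the five-example cap are hypothetical choices for illustration.

```python
def build_context(
    task: str,
    corrections: list[dict[str, str]],
    principles: dict[str, list[str]],
    max_examples: int = 5,
) -> str:
    """Turn stored principles and recent corrections for one task into a prompt preamble."""
    lines: list[str] = []
    # Layer 1: distilled principles (the "pattern" layer).
    task_principles = principles.get(task, [])
    if task_principles:
        lines.append("Follow these learned preferences:")
        lines.extend(f"- {p}" for p in task_principles)
    # Layer 2: raw corrections for this task. Assumes the list is stored
    # oldest-first, so the slice keeps the most recent ones.
    relevant = [c for c in corrections if c["task"] == task][-max_examples:]
    if relevant:
        lines.append("Recent corrections for this task (before -> after):")
        lines.extend(f"- {c['before']} -> {c['after']}" for c in relevant)
    return "\n".join(lines)


def build_prompt(task: str, request: str,
                 corrections: list[dict[str, str]],
                 principles: dict[str, list[str]]) -> str:
    """Prepend the loaded context to the new request; send the request alone if nothing is stored."""
    context = build_context(task, corrections, principles)
    return f"{context}\n\n{request}" if context else request


if __name__ == "__main__":
    corrections = [
        {"task": "client_proposal", "before": "Timeline section first", "after": "Pricing summary first"},
        {"task": "invoice", "before": "Dear Sir", "after": "Hi Sam"},
    ]
    principles = {"client_proposal": ["Pricing summary first, deliverables second, timeline last"]}
    print(build_prompt("client_proposal", "Draft a proposal for Acme.", corrections, principles))
```

Only corrections for the same task are loaded, which keeps the preamble short and avoids leaking, say, invoice tone rules into a proposal.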

Here's what that means for you: your AI walks in already knowing what you taught it last time. And the time before that. And every correction since day 1.

For example, if you correct your AI 3 times about how to format client proposals, a compound system doesn't just remember those 3 fixes. It extracts the principle: “This person wants proposals formatted with the pricing summary first, deliverables second, timeline last.” Next time, the AI formats it correctly without being told.
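The post does not describe how Honcho infers its rules, and a production system would likely use a language model for that step. As a much simpler stand-in, the sketch below assumes each correction has already been labeled with a short rule and promotes any rule seen at least three times for the same task, matching the three-correction example above. The function name, the labels, and the threshold are illustrative assumptions.

```python
from collections import Counter


def extract_principles(corrections: list[tuple[str, str]], threshold: int = 3) -> dict[str, list[str]]:
    """Promote any (task, rule) pair seen at least `threshold` times to a standing principle.

    Each correction is assumed to arrive as a (task, rule) label; frequency
    counting here is a simplified substitute for real pattern extraction.
    """
    counts = Counter(corrections)
    principles: dict[str, list[str]] = {}
    for (task, rule), n in counts.items():
        if n >= threshold:
            principles.setdefault(task, []).append(rule)
    return principles


log = [("client_proposal", "Put the pricing summary first, deliverables second, timeline last")] * 3
log.append(("client_proposal", "Use British spelling"))  # seen once, so not promoted yet
print(extract_principles(log))
# {'client_proposal': ['Put the pricing summary first, deliverables second, timeline last']}
```

The threshold is the design lever: set it too low and one-off fixes harden into rules; set it too high and the system takes longer to stop repeating mistakes.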

That's not a correction anymore. That's an investment. And it pays dividends on every run after it.

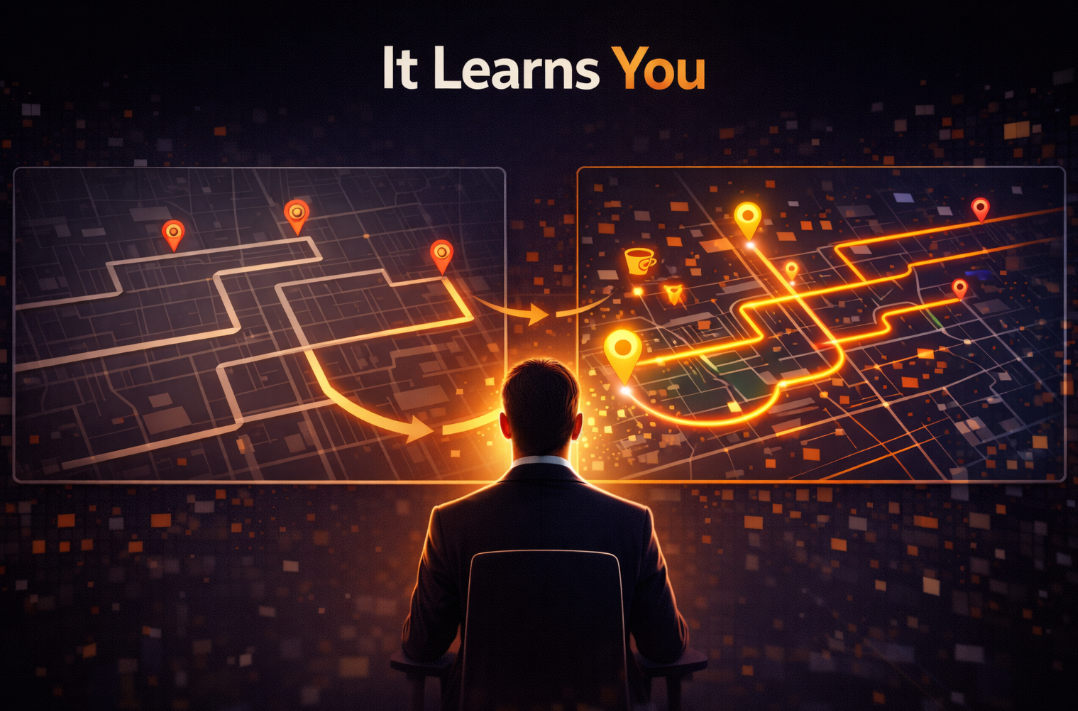

The GPS That Learns Your Routes

Think GPS vs. paper map.

A paper map gives you directions. It works. You could use it 500 times and it would still give you the same route. It would never learn that you hate the motorway, always turn early at that intersection, or stop for coffee on Thursdays.

A GPS that learns your routes is different. After a few weeks, it knows to turn early at that intersection. It knows your coffee stop. After 6 months, it's suggesting destinations before you even ask.

Because it understood where you were headed.

Your corrections are the trips. The memory layer is the GPS building a model of how you think, what you value, and where you're going.

Traditional AI corrections are the paper map. You do the work every single time. Compound corrections are the GPS. Every trip sharpens the model. Eventually, the system finishes your sentences.

Most businesses don't see this gap. They're reviewing every output. Fixing the same mistakes on repeat. Calling it “human in the loop.”

What they actually have is a human on a treadmill.

The Gap Between Supervising and Shaping

The businesses pulling ahead right now aren't the ones with better AI models or bigger budgets. They're the ones who figured out that corrections are data.

Every time you fix an AI output, you're generating a signal about how your business operates. What tone you use with clients. How you structure proposals. Which details matter and which ones don't. That signal is worth something. But only if you capture it.

So the question isn't whether you're using AI. Most service businesses are, in some form. The real question is simpler.

Is your AI learning from you, or starting over every morning?

One costs the same time forever. The other gets smarter every time you touch it. The compounding is silent. You don't notice it week to week. But 6 months in, your agents are anticipating decisions you haven't made yet. Because every correction you gave them built the model of how you operate.

That's not science fiction. That's architecture. It's the difference between running AI and building a system that runs with you.

Frequently Asked Questions

What is “human in the loop with compound interest”?

It's a system where every correction you make to an AI output gets stored, analyzed for patterns, and loaded as context before the next run. Instead of corrections disappearing after each session, they compound over time. The AI gets sharper with every interaction because it retains and builds on what you've taught it.

Do I need to be technical to build a compound correction system?

No. The technical architecture runs in the background. From your perspective, you correct AI outputs the same way you always have. The difference is that a system captures those corrections, extracts the patterns, and applies them automatically. You keep doing what you're already doing. The system does the compounding.

How long before the AI starts anticipating what I want?

Most users see noticeable improvement within 2 to 4 weeks of consistent use. By month 3, the AI handles routine decisions without prompting. By month 6, it's making connections between your preferences that you haven't explicitly stated. The more you correct, the faster it compounds.

Ready to see where AI can eliminate your biggest operational bottleneck? Take a free Hiring Tax Diagnostic and we'll map exactly where you're bleeding time and money. https://calculator.maramamarketing.com